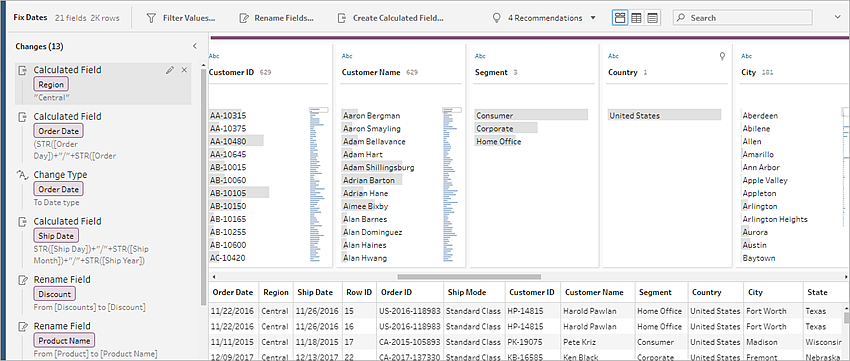

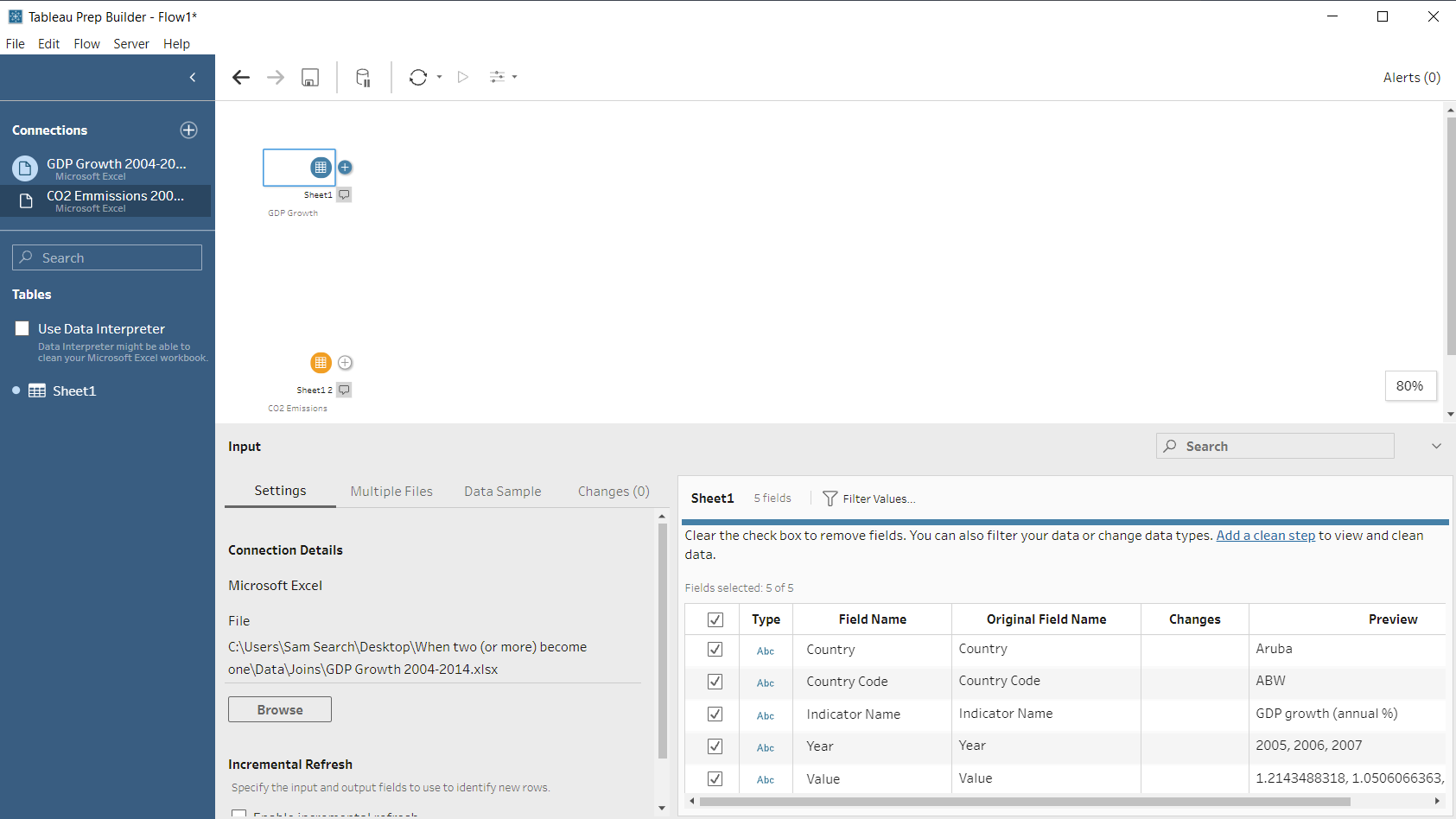

In this series, Tableau Zen Master Dan Murray takes a closer look at the Tableau Prep Builder and Conductor tools, their power and scope and how they stack up against other ETL tools.

Before you begin building a workflow using Tableau Prep, it’s helpful to know a little bit about the data source(s) you need to connect to.

- What kind of source are you connecting to?
- How large are the files? Record counts? Rows/columns?
- Are you familiar with the data structures?
- Do you know of any data quality issues?
- How frequently will you be updating the data?

Understanding the Basics of Your Data Sources

Understanding the basic data structure and size, as well as the granularity of different sources, helps you plan the flow in your mind before you get into the detailed challenges that the transformation of the raw data sources poses. In the example I’m drawing upon for this series, I’m using a version of Superstore data I created, along with public data from the Census Bureau. I’m going to create a workflow that will combine four tables containing annual sales data and a single dimension table that will be joined to provide regional manager names. These will be joined with a population dataset from the Census, enabling us to normalize sales for population for each state that had sales.

The data used in this example comes from two different spreadsheets: one that contains four (4) sales worksheets and one (1) Regional Manager worksheet, and another spreadsheet containing the census data.

Experienced Tableau Desktop users should be familiar with the Superstore dataset. In this spreadsheet, I’ve separated each year’s sales into its own worksheet. This data could have been in a text file or a database. The Census data provides population estimates for each state for the corresponding years.

Because the world isn’t perfect, we will have to deal with data quality issues in these files and with different aggregations of the data, and we will have to union files, join files, pivot the data and re-aggregate it. There are also inconsistencies within specific fields that will have to be cleaned. The datasets are small, but every kind of data transformation step that Tableau Prep provides will have to be used to prepare the data for analysis in Tableau Desktop. We will also create a calculation in Prep to normalize sales by state and year for the population in each state. The data is not perfectly clean, and some of the structures aren’t right. We’ll use Tableau Prep to address all of the issues and create a clean dataset for other people to use.
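To make the plan concrete, here is a rough Python sketch of the sequence this post describes: union the four yearly sales sheets, clean an inconsistent field, re-aggregate, join the regional manager table, pivot the Census data and normalize sales by population. It is not how Tableau Prep works internally, and it is not the flow built later in the series. Every name, year, state fix and number below is made up for illustration.

```python
from collections import defaultdict

# Hypothetical cleanup rule for an inconsistent state field.
STATE_FIXES = {"GA": "Georgia"}

def union_sales(sheets):
    """Union: stack the yearly sheets, tagging each row with its year."""
    rows = []
    for year, sheet in sheets.items():
        for row in sheet:
            state = STATE_FIXES.get(row["state"], row["state"])
            rows.append({**row, "state": state, "year": year})
    return rows

def aggregate_sales(rows):
    """Aggregate: total sales per (state, year, region)."""
    totals = defaultdict(float)
    for row in rows:
        totals[(row["state"], row["year"], row["region"])] += row["sales"]
    return totals

def pivot_census(wide_rows):
    """Pivot: turn one column per year into one entry per (state, year)."""
    return {
        (row["state"], year): population
        for row in wide_rows
        for year, population in row.items()
        if year != "state"
    }

def build_dataset(sheets, managers, census_wide):
    """Join aggregated sales to managers and population, then normalize."""
    population = pivot_census(census_wide)
    totals = aggregate_sales(union_sales(sheets))
    result = []
    for (state, year, region), sales in sorted(totals.items()):
        pop = population.get((state, year))
        result.append({
            "state": state,
            "year": year,
            "manager": managers.get(region),
            "sales": sales,
            "sales_per_capita": sales / pop if pop else None,
        })
    return result

# Made-up sample data: one list of rows per yearly worksheet.
sales_by_sheet = {
    2015: [{"state": "Georgia", "region": "South", "sales": 120.0},
           {"state": "GA", "region": "South", "sales": 80.0}],
    2016: [{"state": "Georgia", "region": "South", "sales": 300.0}],
    2017: [{"state": "Ohio", "region": "East", "sales": 150.0}],
    2018: [{"state": "Ohio", "region": "East", "sales": 50.0}],
}
managers = {"South": "Manager A", "East": "Manager B"}
# Made-up populations in a wide layout: one row per state, one column per year.
census_wide = [
    {"state": "Georgia", 2015: 1000, 2016: 1200},
    {"state": "Ohio", 2017: 500, 2018: 500},
]

result = build_dataset(sales_by_sheet, managers, census_wide)
for row in result:
    print(row)
```

Aggregating sales to the state and year level before joining matters here: the population figures are one row per state and year, so joining them to row-level sales first would repeat the population on every sales row.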
